In AI training clusters and high-performance computing (HPC), multi-GPU collaboration has become the mainstream way to scale computational power. However, as model sizes and data-throughput requirements keep growing, traditional interconnect architectures struggle to handle the communication load of multi-GPU parallelism. NVIDIA's NVSwitch technology, often described as the "nerve center" of a multi-GPU system, is designed specifically to remove the high-speed communication bottleneck between GPUs.

This article examines the critical role NVSwitch plays in GPU interconnection, covering its definition, core technologies, advantages, and practical applications.

I. Foreword: Interconnect Bottlenecks as the "Invisible Ceiling" for GPU Cluster Development

In compute-intensive scenarios such as deep learning, scientific simulation, and real-time inference, individual GPUs are powerful, but if interconnect bandwidth is insufficient or communication latency is too high, data "traffic jams" keep a multi-GPU system from reaching its full potential.

NVSwitch was created to break through this bottleneck and provide high-speed, stable, and intelligent interconnection for large-scale GPU systems.

II. Definition of NVSwitch: The New Hub for GPU Communication

● Technical Overview

NVSwitch is a high-speed switching chip that NVIDIA designed specifically for multi-GPU architectures; in essence, it is an intelligent communication hub. The first-generation chip provides 18 NVLink ports (later generations increase the port count), and by attaching GPUs to one or more such switches, a system can build a fully connected topology in which data moves at high speed between any pair of GPUs.

● Core Functions

- Builds a fully connected architecture among multiple GPUs, improving communication efficiency;

- Provides a modular design for easy system expansion and flexible deployment;

- Addresses bottleneck issues in traditional PCIe and chained NVLink interconnections.
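To see why chained links become a bottleneck, the sketch below counts how many units of traffic cross each link when every GPU sends one unit to every other GPU (all-to-all). It assumes a deliberately simplified model: a daisy chain in which each GPU links only to its neighbors, versus a single non-blocking switch to which each GPU has one link. Real NVSwitch systems give each GPU several links spread over several switches, and the function names are hypothetical.

```python
from collections import Counter
from itertools import permutations


def chain_link_loads(n: int) -> Counter:
    """Directed load on each link of a daisy chain 0-1-...-(n-1).

    Every ordered pair (src, dst) sends one unit, which is forwarded
    hop by hop through the GPUs that lie between them.
    """
    loads = Counter()
    for src, dst in permutations(range(n), 2):
        step = 1 if dst > src else -1
        for a in range(src, dst, step):
            loads[(a, a + step)] += 1  # traffic crossing link a -> a+step
    return loads


def switch_link_loads(n: int) -> Counter:
    """Directed load when each GPU has one link to a non-blocking switch."""
    loads = Counter()
    for src, dst in permutations(range(n), 2):
        loads[(src, "switch")] += 1  # uplink from the sender
        loads[("switch", dst)] += 1  # downlink to the receiver
    return loads


for n in (4, 8, 16):
    print(n, max(chain_link_loads(n).values()), max(switch_link_loads(n).values()))
```

In the chain, the busiest (middle) link carries roughly n²/4 units, so it saturates quickly as GPUs are added; with the switch, no link carries more than n-1 units.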

● Technical Goals

The ultimate goal of NVSwitch is to eliminate communication bottlenecks so that each GPU can access the memory of other GPUs in the system almost as efficiently as its own local memory, providing a near "zero-friction" communication channel for large-scale parallel tasks.

III. Core Technical Features of NVSwitch

1. Ultra-High Bandwidth Transmission Capability

Each NVSwitch chip provides many NVLink ports (18 in the first generation, more in later ones), giving an aggregate switching bandwidth that reaches several TB/s in recent generations and building a "data highway" for multi-GPU collaboration.
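Aggregate switch bandwidth is simply the number of ports multiplied by the bandwidth of each port. The sketch below assumes 50 GB/s per port counted bidirectionally (25 GB/s in each direction), which matches a single NVLink link in the NVLink 2.0 to 4.0 generations; the function name is hypothetical and the port counts are example inputs.

```python
def aggregate_bandwidth_gbs(ports: int, per_port_gbs: float = 50.0) -> float:
    """Total bidirectional switching bandwidth in GB/s.

    per_port_gbs is an assumption: 50 GB/s bidirectional per NVLink link.
    """
    if ports < 0 or per_port_gbs < 0:
        raise ValueError("ports and per-port bandwidth must be non-negative")
    return ports * per_port_gbs


for ports in (18, 64):
    total = aggregate_bandwidth_gbs(ports)
    print(f"{ports} ports -> {total:.0f} GB/s ({total / 1000:.1f} TB/s)")
```

Under these assumptions the total grows linearly with port count: an 18-port switch comes to 900 GB/s, while a 64-port switch reaches 3.2 TB/s.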

2. Full Interconnection Capability Between GPUs

NVSwitch replaces the traditional serial (chained) connection method with an all-to-all network structure: each GPU reaches any other GPU through a single switch hop instead of being forwarded through intermediate GPUs, which significantly improves data-exchange efficiency within the system.
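The difference in path length can be checked with a breadth-first search over a toy topology. The sketch below, with hypothetical helper names, computes the worst-case number of link traversals between any two GPUs for a daisy chain and for a star in which every GPU connects to one switch node; it ignores multi-switch designs.

```python
from collections import deque


def chain(n: int) -> dict:
    """Daisy chain: GPU i is linked only to GPUs i-1 and i+1."""
    adj = {i: [] for i in range(n)}
    for i in range(n - 1):
        adj[i].append(i + 1)
        adj[i + 1].append(i)
    return adj


def switched(n: int) -> dict:
    """Star: every GPU has a link to a single switch node 'sw'."""
    adj = {i: ["sw"] for i in range(n)}
    adj["sw"] = list(range(n))
    return adj


def max_gpu_hops(adj: dict) -> int:
    """Worst-case shortest-path length, in links, between any two GPUs (int nodes)."""
    gpus = [v for v in adj if isinstance(v, int)]
    worst = 0
    for start in gpus:
        dist = {start: 0}
        queue = deque([start])
        while queue:  # breadth-first search from this GPU
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        worst = max(worst, max(dist[g] for g in gpus))
    return worst


for n in (4, 8, 16):
    print(n, max_gpu_hops(chain(n)), max_gpu_hops(switched(n)))
```

The chain's worst case grows as n-1 links, while the switched star stays at two links (GPU to switch, switch to GPU) no matter how many GPUs are attached.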

3. Extremely Low Latency Architecture

By optimizing communication protocols and data paths, NVSwitch minimizes data-exchange latency, which makes it well suited to AI model training and scientific simulation tasks with demanding real-time requirements.
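A common way to reason about why latency matters is the latency-bandwidth (alpha-beta) cost model, in which a transfer takes a fixed startup latency plus its size divided by bandwidth. The parameter values below are illustrative assumptions, not measured NVSwitch figures, and the function name is hypothetical.

```python
def transfer_time_us(size_bytes: float, latency_us: float, bandwidth_gbs: float) -> float:
    """Alpha-beta model: startup latency plus serialization time.

    1 GB/s = 1e9 bytes / 1e6 us = 1e3 bytes per microsecond.
    """
    return latency_us + size_bytes / (bandwidth_gbs * 1e3)


LATENCY_US = 2.0        # assumed per-message latency
BANDWIDTH_GBS = 100.0   # assumed link bandwidth

for size in (4 * 1024, 1024 * 1024, 1024 ** 3):
    t = transfer_time_us(size, LATENCY_US, BANDWIDTH_GBS)
    print(f"{size:>12} bytes: {t:10.2f} us, latency share {LATENCY_US / t:.1%}")
```

For small messages the fixed latency dominates the total time, so lowering latency helps fine-grained communication far more than extra bandwidth would; for very large transfers the opposite holds.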

4. Strong Modular Scalability

Multiple NVSwitch chips can work together to build larger interconnect networks, supporting multi-GPU systems that range from 8 GPUs to more than 100 GPUs and meeting the scale-out needs of ultra-large models and complex workloads.
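One reason a switch fabric scales better than wiring GPUs directly to one another is port count. In the sketch below (hypothetical names), a direct full mesh needs n-1 ports on every GPU, whereas with a single tier of switches each GPU keeps a fixed number of links and only the switch count grows. The 6 links per GPU and 18 ports per switch are example assumptions, and the switch count is a lower bound that assumes every switch port faces a GPU.

```python
from math import ceil

GPU_LINK_PORTS = 6     # assumed number of link ports on each GPU
SWITCH_PORTS = 18      # assumed number of ports on each switch chip


def mesh_ports_needed(n: int) -> int:
    """A direct full mesh needs one port per peer on every GPU."""
    return n - 1


def min_switches(n: int, links_per_gpu: int, ports_per_switch: int) -> int:
    """Lower bound on switches for one switch tier.

    Assumes every switch port connects to a GPU link.
    """
    return ceil(n * links_per_gpu / ports_per_switch)


for n in (4, 8, 16, 64):
    mesh = mesh_ports_needed(n)
    fits = "fits" if mesh <= GPU_LINK_PORTS else "exceeds GPU ports"
    print(f"{n:>3} GPUs: mesh needs {mesh:>2} ports/GPU ({fits}); "
          f"switched needs {GPU_LINK_PORTS} ports/GPU and >= "
          f"{min_switches(n, GPU_LINK_PORTS, SWITCH_PORTS)} switches")
```

A multi-tier fabric would also spend switch ports on switch-to-switch links, so it would need more switches than this single-tier bound.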

5. Intelligent Scheduling and Link Management

NVSwitch includes built-in mechanisms for scheduling link resources, allocating communication capacity according to the workload to improve efficiency and avoid bottleneck nodes.
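The article does not specify how NVSwitch allocates link resources, so the sketch below is only a generic illustration of workload-aware allocation, not NVSwitch's actual mechanism: a greedy "least-loaded link" assignment that places the largest flows first, each on the currently least-busy link. The flow sizes and function name are hypothetical.

```python
import heapq


def assign_flows(flow_sizes: list[float], num_links: int) -> tuple[dict[int, int], list[float]]:
    """Greedily place each flow (largest first) on the least-loaded link.

    Returns (flow_id -> link_id mapping, per-link total load).
    """
    heap = [(0.0, link) for link in range(num_links)]  # (current load, link id)
    heapq.heapify(heap)
    assignment: dict[int, int] = {}
    loads = [0.0] * num_links
    for flow_id, size in sorted(enumerate(flow_sizes), key=lambda f: -f[1]):
        load, link = heapq.heappop(heap)
        assignment[flow_id] = link
        loads[link] = load + size
        heapq.heappush(heap, (loads[link], link))
    return assignment, loads


flows = [8, 7, 6, 5, 4]
_, greedy = assign_flows(flows, num_links=2)
round_robin = [sum(flows[0::2]), sum(flows[1::2])]  # static alternating placement
print("greedy:", greedy, "round-robin:", round_robin)
```

Here the static alternating placement leaves one link carrying 18 units, while the greedy placement caps the busiest link at 17.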

IV. Technical Advantages of NVSwitch: Comprehensive Upgrade in Performance, Latency, and Topology

| Comparison Dimension | Traditional PCIe / Chained NVLink | NVSwitch Interconnect |
|---|---|---|
| Communication Bandwidth | Limited, prone to congestion | Several TB/s aggregate, high throughput |
| Communication Path | Multi-hop transmission | Fully connected, one switch hop between any two GPUs |
| Latency | Higher latency, more data conflicts | Lower latency, higher parallel efficiency |
| System Scalability | Difficult to scale, limited topology | Modular design, scales flexibly to large GPU counts |
| Scheduling Intelligence | Static connections, no resource scheduling | Managed link resources for maximum efficiency |

NVSwitch not only enhances bandwidth and latency performance but also brings structural flexibility and intelligence to the design of multi-GPU systems.

V. Practical Application Scenarios of NVSwitch

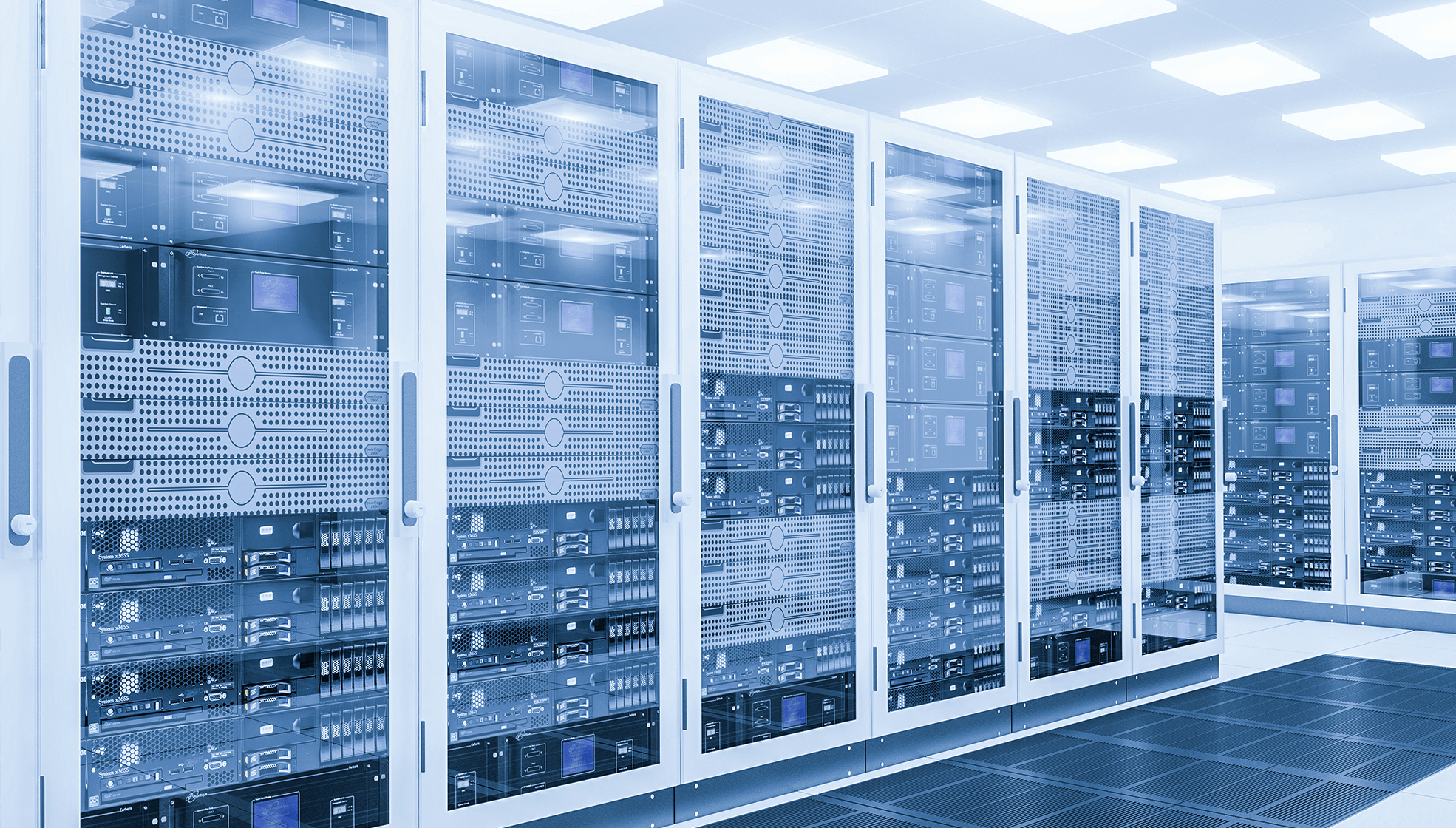

● Data Centers

In AI inference platforms and training clusters, NVSwitch connects multiple GPUs at high speed, significantly boosting training efficiency and inference throughput, and it has become a standard component of high-density platforms such as NVIDIA DGX systems.

● Supercomputers

In scientific computing tasks such as weather simulation, genomic analysis, and materials science, NVSwitch helps build parallel platforms with dozens or even hundreds of GPUs, increasing the system's overall concurrency.

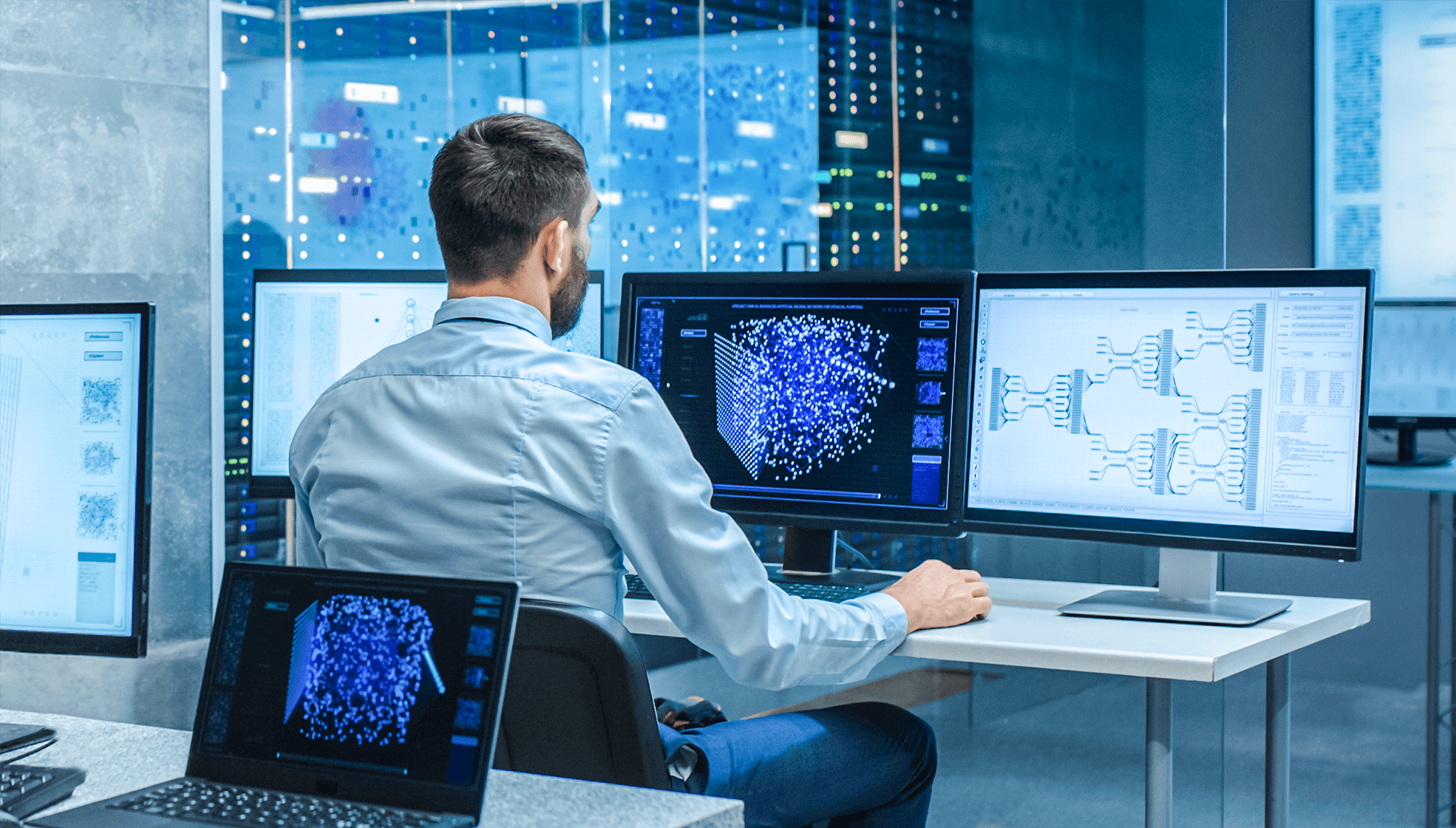

● AI Clusters

When training large models such as GPT and BERT, NVSwitch provides efficient channels for synchronizing data between GPUs, accelerating distributed training and shortening development cycles.
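Data synchronization in data-parallel training is typically an all-reduce of gradients across GPUs. The sketch below simulates ring all-reduce, one standard algorithm (collective libraries such as NCCL choose among several), to show the traffic involved: for a buffer of size S split into n chunks, each GPU sends 2(n-1) chunks of size S/n. Each chunk is a single number here for simplicity, and the function name is hypothetical.

```python
def ring_allreduce(buffers: list[list[float]]) -> tuple[list[list[float]], list[int]]:
    """Simulate ring all-reduce over n ranks, each holding n chunks.

    Returns the final buffers (every rank holds the element-wise sum)
    and the number of chunks each rank sent.
    """
    n = len(buffers)
    assert all(len(b) == n for b in buffers), "each rank needs n chunks"
    data = [list(b) for b in buffers]
    sent = [0] * n

    # Phase 1, reduce-scatter: at step s, rank r sends chunk (r - s) % n to
    # rank r + 1, which adds it to its own copy.
    for step in range(n - 1):
        msgs = [(r, (r - step) % n, data[r][(r - step) % n]) for r in range(n)]
        for src, chunk, value in msgs:
            data[(src + 1) % n][chunk] += value
            sent[src] += 1
    # Now rank r holds the fully reduced chunk (r + 1) % n.

    # Phase 2, all-gather: at step s, rank r forwards chunk (r + 1 - s) % n,
    # and the receiver overwrites its copy with the reduced value.
    for step in range(n - 1):
        msgs = [(r, (r + 1 - step) % n, data[r][(r + 1 - step) % n]) for r in range(n)]
        for src, chunk, value in msgs:
            data[(src + 1) % n][chunk] = value
            sent[src] += 1
    return data, sent


ranks = [[1, 2, 3, 4], [10, 20, 30, 40], [100, 200, 300, 400], [1000, 2000, 3000, 4000]]
result, chunks_sent = ring_allreduce(ranks)
print(result[0], chunks_sent)
```

With n = 4, each rank sends 6 chunks, i.e. 2(n-1)/n = 1.5 times its buffer size, and this approaches 2S per GPU as n grows; for large buffers, the per-GPU bandwidth the interconnect can sustain for all GPUs at once therefore largely sets the synchronization time.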

VI. Conclusion: NVSwitch, the "Neural Hub" of the GPU Era

● Core Value

With its core advantages of high bandwidth, low latency, full interconnection, and intelligent link management, NVSwitch redefines how GPUs within a cluster communicate and has become an indispensable component of high-performance systems.

● Practical Significance

Whether in AI training, scientific simulation, or data center operations, NVSwitch significantly improves the overall performance and resource utilization of GPU systems.

● Future Outlook

As GPU systems continue to grow, future NVSwitch generations are expected to offer higher link density, greater bandwidth, and more intelligent interconnection strategies, continuing to power next-generation AI infrastructure and supercomputing platforms.